Key Takeaways

- High-Volume, Low-Stakes Tasks: AI accelerates first drafts, meeting summaries, and sprint prep while judgment stays with the BA.

- Three Risk Categories: Stakeholder interpretation, requirements analysis, and compliance outputs are where AI and BA accountability diverge.

- Output Quality Signals: Cross-check against source notes, call out assumptions, and close the stakeholder confirmation loop.

- The Validation Standard: Reviewing AI output before it moves is the BA’s job.

- Team-Level Consistency: One unvalidated assumption puts everyone who built from it at risk.

The sprint review was supposed to be a checkpoint. Instead, it became a live debugging session. A stakeholder flagged a requirement that didn’t reflect what they’d said in the discovery meeting. The AI-generated summary looked correct, but the stakeholder knew otherwise. And the BA was the one fielding the question.

Output that looks finished and output that is finished are not the same thing. A well-written summary carries the same visual authority whether it accurately reflects stakeholder intent or subtly distorts it. There’s no yellow flag in the document. No asterisk. The text reads like work that’s been done, and that’s the problem.

AI does exactly what it’s designed to do, which is to produce coherent, plausible output based on patterns in the data it reads. It has no way of knowing whether that output is accurate, and it has no stake in whether it is. Understanding where the boundary between AI output and BA judgment sits is what makes the difference between using AI well and over-relying on it.

Problems like this rarely appear until a sprint review, an audit, or a conversation with a stakeholder who remembers exactly what they said. By the time they do, the project has already moved in the wrong direction, and catching it late costs more than slowing down early. BAs need a practical framework for navigating this risk, and what separates effective use from over-reliance is knowing where the line is and building the habits that make AI output worth trusting before it moves forward.

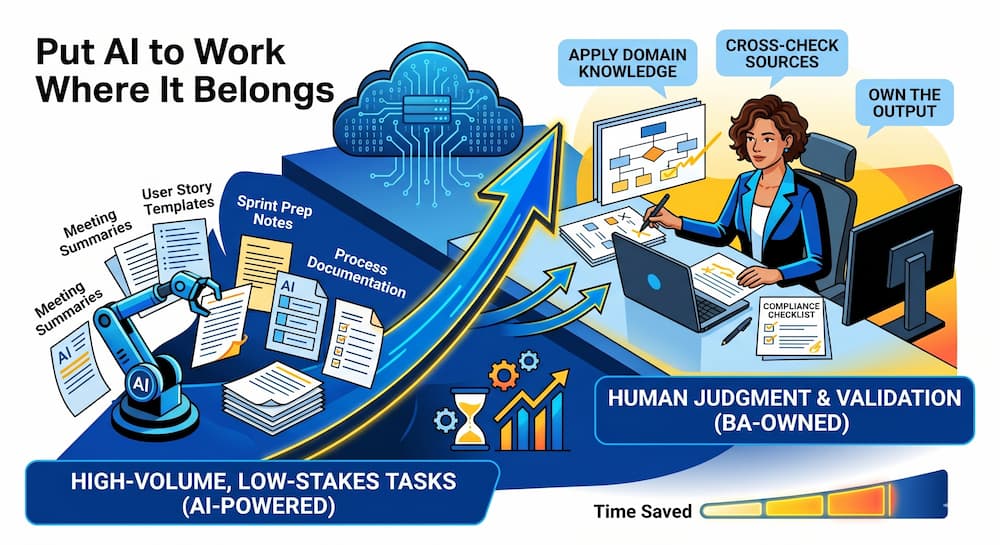

Put AI to Work Where It Belongs

There’s a reason AI has taken hold in BA workflows so quickly. For tasks that are high-volume, structurally repetitive, and low-stakes-if-wrong, AI cuts the time to produce a first draft. The output is a starting point that gets a BA closer to completion faster than starting from scratch.

That speed frees up time and attention for the judgment calls that actually define the role. BAs then apply their domain knowledge before anything leaves their hands.

The pattern has research behind it. According to the Federal Reserve Bank of St. Louis, workers using generative AI reported saving an average of 2.2 hours per week. Among workers who used generative AI every workday, roughly a third reported saving four or more hours. For BAs, those savings show up in exactly the kind of work AI handles well: volume tasks that are time-consuming but don’t hinge on human judgment for the first pass. A practical entry point is mapping your current workflow against those task types and letting AI take the first pass where the cost of being wrong is low.

AI handles tasks like these well:

- Meeting summaries: First-draft recaps of discovery sessions and sprint reviews, validated before sharing

- User story templates: Structural starting points shaped by domain knowledge before moving to the team

- Process documentation: Initial flow documentation refined against actual business rules

- Sprint prep notes: Agenda drafts and context summaries confirmed against the current project state

These tasks work because there’s a low cost to being wrong on the first pass. A meeting summary missing a nuance gets corrected before it goes anywhere. A user story template that needs restructuring gets rewritten. The BA is still in the loop. However, other categories carry a higher cost if the first pass is wrong.

Where the Judgment Call Is Yours

The three categories below share something the low-stakes tasks don’t: the BA is accountable for the output whether or not AI produced it.

The difference runs deeper than risk level. AI can produce the output, but it can’t own accountability. The model only reads language and context for patterns. It doesn’t know what the BA knows, and it can’t be held responsible for what moves forward. Accountability stays with the BA.

Each category below carries a different kind of risk, but all three point to the same implication. Knowing the output came from AI doesn’t change who owns it.

Stakeholder Interpretation

The first is stakeholder interpretation. When AI summarizes what stakeholders “want” or “agreed to,” it’s reading language patterns rather than the business context the BA was present for. A stakeholder’s hesitation, shift in tone, or walked-back comment doesn’t make it into a transcript. A clean summary doesn’t mean the interpretation behind it is right.

Stakeholder intent is easy to approximate and hard to verify after the fact. Before this output moves, confirm it reflects reality, not just a plausible reading of the transcript.

Stakeholder Confirmation Checks

- Check the summary: Confirm it reflects what was said, not just what sounds plausible

- Cross-check sources: Verify conclusions against source notes, recordings, or stakeholder emails

- Confirm commitments: Verify any implied commitments directly with the stakeholder before the output moves

Each check closes the gap between what the AI read and what the BA witnessed.

Requirements Analysis

The second is requirements analysis. AI can flag potential incompleteness or contradictions in requirements, but knowing which findings matter requires context the model doesn’t have: current project constraints, team dynamics, and decisions that can turn a flagged item into a known, intentional tradeoff.

Not every flagged item is an open issue. Acting on one without checking first can send a project chasing a problem that no longer exists.

Requirements Validation Checks

- Confirm flagged items: Check each finding against current project constraints before acting

- Check for tradeoffs: Determine whether the finding reflects a known, intentional decision already made by the team

- Verify scope: Confirm the conclusion holds against decisions made outside the documents AI reviewed

Running these checks before acting keeps the project moving on decisions that were made, not findings that were already resolved.

Compliance

The third is compliance-related outputs. Requirements touching regulatory, audit, or legal territory carry liability that follows the person who signed off, not the tool that generated the draft. AI doesn’t know whether a draft meets compliance requirements, and the BA who approves it without a second look is accountable if it doesn’t.

Compliance outputs need a human checkpoint every time, without exception. The draft is a starting point, not a finished product.

Compliance Review Checks

- Verify against the standard: Confirm requirements against the actual compliance requirement, not just the draft

- Check sign-off authority: Verify the right person is approving before the output moves forward

- Document the review: Record what was checked, who confirmed it, and when

A compliance output without this trail isn’t just incomplete. It’s a liability the BA owns.
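For teams that track requirements in a tool or script, the review trail described above could be sketched roughly as follows. This is a minimal illustration, not a prescribed format: the `ComplianceReview` record, its field names, the `missing_reviews` helper, and the sample requirement IDs are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, List


@dataclass(frozen=True)
class ComplianceReview:
    """Hypothetical record of one compliance review: what, who, and when."""
    requirement_id: str    # which requirement was reviewed
    standard_checked: str  # the actual compliance requirement it was checked against
    confirmed_by: str      # the person with sign-off authority
    reviewed_on: date      # when the review happened


def missing_reviews(requirement_ids: Iterable[str],
                    reviews: Iterable[ComplianceReview]) -> List[str]:
    """Return the requirement IDs that have no recorded review yet."""
    reviewed = {r.requirement_id for r in reviews}
    return [rid for rid in requirement_ids if rid not in reviewed]


# Example with made-up IDs: REQ-2 has no documented review, so it is not ready to move.
log = [ComplianceReview("REQ-1", "data retention policy", "Compliance lead", date(2024, 1, 15))]
print(missing_reviews(["REQ-1", "REQ-2"], log))
```

The point of a record like this is only that the trail exists and can be checked; the judgment about whether a requirement meets the standard still sits with the reviewer.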

Knowing which categories carry risk is the first part. The next is knowing what a validated output looks like and building the habits that make that standard consistent across the team.

Know What Validated Output Requires

Validation has two parts: knowing what to question and knowing what done looks like. When an output meets that bar, the BA can move it forward knowing it reflects what happened.

That standard applies regardless of where a BA is in their career. Though experience changes the scale and complexity of what you’re delivering, the validation habit remains the same.

A validated output has three concrete characteristics:

Validated Output Checks

- Cross-checked against source material: Confirmed against meeting notes, discovery session recordings, and stakeholder emails

- Explicit assumption callouts: Places where the BA has noted “I interpreted this as X” or “this conclusion rests on Y being true”

- Completed confirmation loop: Any conclusion touching intent, commitment, or priority has been confirmed directly with the stakeholder

All three together mean the output can move forward on what happened, and the BA can defend it if challenged.
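One way to make the three checks concrete is a small checklist record kept alongside each AI draft. The sketch below is an assumption-laden illustration: the `OutputReview` class, its fields, and the wording of the open items are hypothetical, and it simplifies by treating every output as needing stakeholder confirmation.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class OutputReview:
    """Hypothetical checklist for one AI-drafted output."""
    title: str
    sources_checked: List[str] = field(default_factory=list)  # notes, recordings, emails
    assumptions: List[str] = field(default_factory=list)      # "I interpreted this as X"
    assumptions_reviewed: bool = False                        # BA has gone looking for assumptions
    confirmed_by: Optional[str] = None                        # stakeholder who confirmed intent

    def open_items(self) -> List[str]:
        """List the checks that still stand between this draft and a handoff."""
        items = []
        if not self.sources_checked:
            items.append("cross-check against source material")
        if not self.assumptions_reviewed:
            items.append("call out assumptions explicitly")
        if self.confirmed_by is None:
            items.append("complete the stakeholder confirmation loop")
        return items

    def is_validated(self) -> bool:
        """True only when all three checks are done."""
        return not self.open_items()


# Example: a summary that has been cross-checked but not yet confirmed.
review = OutputReview(title="Discovery session summary")
review.sources_checked.append("meeting notes")
print(review.open_items())
```

A record like this does not do the validation. It only makes visible which of the three checks are still open before the output moves.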

A BA who completed the confirmation loop on the day of the discovery session stands on firmer ground than one who can only point to an AI summary. Keeping a record of where the team used AI, what was validated, and how it was validated helps in audit situations, onboarding conversations, and late-sprint stakeholder challenges.

Individual validation matters, but one weak link undermines what the rest of the team built. When one BA validates consistently and another doesn’t, it disrupts the flow of the whole project.

Engaging in shared training builds deliberate practice, creating a team standard where any BA can defend an output that leaves the team, regardless of who produced it. A team that validates consistently builds the kind of credibility that holds up when a stakeholder pushes back.

Own Every Output Before the Handoff

AI handles the volume while judgment belongs to the BA. For BAs, understanding where AI’s limitations begin and knowing when to step in with consistent, defensible judgment is what separates using AI as a tool from letting it drive decisions that belong to you.

Validation keeps output moving forward based on what happened rather than an approximation of it. Checking your workflow against the low-stakes use cases where AI saves time and the three categories where a second look is necessary builds a practice you can stand behind at every handoff.

Watermark Learning’s BA training is where individuals and teams build the validation practices business analysis demands. Whether you’re sharpening your own judgment or raising the bar across your whole team, training gives you a concrete path to get everyone following the same standard.

Ready to sharpen your judgment and raise your team’s standard at the same time?

Build the validation skills that keep your judgment at the center of your BA practice, even as AI takes on more of the work.
